New York DEFIANCE Act and Deepfake Intimate Image Lawyer

If someone created or distributed AI-generated intimate images of you without your consent, you have legal options right now. Federal law criminalizes the knowing publication and distribution of such content through online platforms. New York state law gives you a private civil cause of action where the statutory requirements are met. And the DEFIANCE Act, a proposed federal civil remedy, passed the United States Senate unanimously in January 2026 and is now pending in the House of Representatives.

This area of law is moving fast. Victims who act quickly, preserve evidence, and retain experienced counsel are in a far stronger position than those who wait.

At Veridian Legal P.C., we represent victims of AI-generated deepfake intimate imagery, non-consensual image-based abuse, and related online exploitation in New York. We pursue takedowns, civil claims, and injunctive relief. If this is happening to you, call us at (212) 706-1007. The consultation is free.

What Is a Deepfake Intimate Image?

A deepfake intimate image is a fabricated visual depicting a real person in a sexualized or nude context, generated through artificial intelligence, machine learning, or other software, without the depicted person's consent. The person in the image is real. The image itself is manufactured.

These images are not a niche problem. AI tools that generate explicit content from a single clothed photograph of a person are widely available and require no technical skill to operate. Victims include teenagers, college students, employees, public figures, and anyone whose image appears online. Once a deepfake is created, it spreads quickly across platforms, messaging apps, and file-sharing networks. The reputational, professional, and psychological harm can be severe and lasting.

You do not need to prove the image was distributed widely. In some cases a deepfake created by a single person and sent to one other person is enough to support a legal claim.

Federal Law: What Is the DEFIANCE Act?

DEFIANCE stands for Disrupt Explicit Forged Images and Non-Consensual Edits. It is a piece of federal legislation specifically designed to give victims of AI-generated intimate imagery a civil cause of action in federal court.

The Senate passed the DEFIANCE Act unanimously on January 13, 2026. The bill is now pending in the House of Representatives, where it has bipartisan support. If signed into law, it would allow victims to sue anyone who knowingly produces, distributes, solicits, or possesses with intent to distribute an intimate digital forgery of an identifiable person without that person's consent.

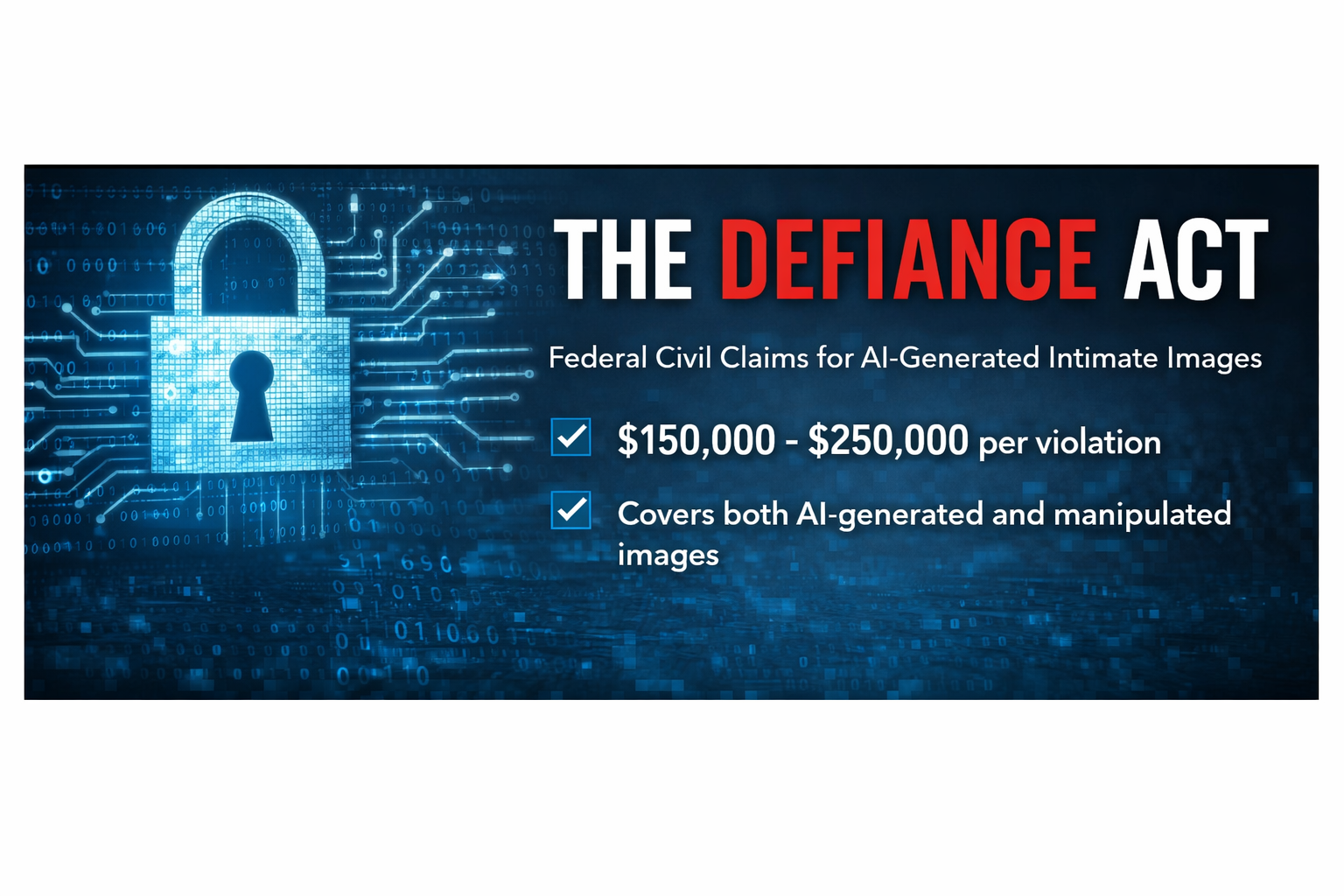

What the DEFIANCE Act Would Allow Victims to Recover

The current version of the DEFIANCE Act provides for liquidated damages of $150,000 per violation. If the deepfake was created in connection with sexual assault, stalking, or harassment of the victim, or was the direct cause of such conduct by any person, liquidated damages increase to $250,000. Victims may also recover actual damages, including any profits the defendant made from the content, plus attorney's fees and costs.
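The two liquidated damages tiers described above can be written as a small lookup. This is only an illustrative sketch of the figures in the current version of the bill as summarized here; the function name and its parameter are hypothetical, and it returns the per-violation figure only, without attempting to model actual damages, profits, fees, or costs.

```python
# Per-violation liquidated damages in the current version of the bill,
# as summarized in the paragraph above (figures may change before enactment).
BASE_LIQUIDATED = 150_000
ENHANCED_LIQUIDATED = 250_000


def liquidated_damages(tied_to_assault_stalking_or_harassment: bool) -> int:
    """Return the per-violation liquidated damages tier.

    The enhanced tier applies when the deepfake was created in connection
    with sexual assault, stalking, or harassment of the victim, or was the
    direct cause of such conduct by any person.
    """
    if tied_to_assault_stalking_or_harassment:
        return ENHANCED_LIQUIDATED
    return BASE_LIQUIDATED


print(liquidated_damages(False))  # 150000
print(liquidated_damages(True))   # 250000
```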

Under the bill, a ten-year statute of limitations runs from the date the victim reasonably discovers the images, or from the victim's eighteenth birthday if the victim was a minor when the images were created.

The DEFIANCE Act Covers Both AI-Generated and Manipulated Images

The bill applies to nude or sexually explicit depictions of a real, identifiable person who did not consent, where the depiction was created through artificial intelligence, machine learning, software, or other technological means so that it falsely appears authentic. This covers both fully fabricated images and real photographs that have been digitally altered to remove clothing or add explicit content.

Federal Law Already in Effect: The TAKE IT DOWN Act

Even before the DEFIANCE Act becomes law, federal law already addresses deepfake intimate imagery. The TAKE IT DOWN Act was signed by President Trump on May 19, 2025. It takes two concrete steps.

First, it makes it a federal crime to knowingly publish non-consensual intimate images, including AI-generated deepfakes, through an interactive computer service. The criminal prohibition covers publication and certain threats of disclosure. It is not a blanket ban on private creation in every circumstance, but it establishes that the federal government treats the distribution of this content as a serious federal offense.

Second, it requires covered platforms to implement a notice-and-removal process. The statute defines covered platforms and includes exclusions, so not every website is subject to the same obligations. Where the law applies, once a victim submits a valid notice, the platform must remove the content within 48 hours. This is a critical tool for victims dealing with content that has spread to multiple sites.

We use the TAKE IT DOWN Act as part of a broader takedown strategy alongside civil claims, injunctive relief, and direct platform reporting.

New York State Law: You Can Sue Right Now

You do not have to wait for the DEFIANCE Act to become law to take civil action in New York. New York Civil Rights Law section 52-b already provides a private cause of action that covers intimate images created or altered through digitization, including by artificial intelligence and machine learning.

To bring a claim under section 52-b, the defendant must have disseminated or published, or threatened to disseminate or publish, an intimate image of the victim. The statute also requires that the defendant acted for the purpose of harassing, annoying, or alarming the victim, and that the image depicted the victim under circumstances where the victim had a reasonable expectation that it would remain private. The law expressly covers digitally created or altered images, and its definition of digitization includes images produced using software, machine learning, artificial intelligence, or any other computer-generated means. Courts have begun applying the statute to digitally created or altered images, including AI-generated content, where the statutory elements are otherwise satisfied.

What You Can Recover Under New York Law

Under New York Civil Rights Law section 52-b, a prevailing plaintiff can recover compensatory damages, including damages for mental anguish, as well as punitive damages, reasonable attorney's fees, and court costs. Injunctive relief, meaning a court order requiring the defendant to take down the content and stop all further distribution, is available and is often the most urgent form of relief we seek for clients.

Under section 52-b, a claim must be filed by the later of two dates: three years from the date of dissemination or publication, or one year from the date the victim discovered the images or reasonably should have discovered them. If you recently found out about content that was posted years ago, the discovery rule may preserve your ability to bring a claim. Call us to assess the timing in your specific situation.
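The "later of" rule above is simple date arithmetic, sketched below. The function names and the sample dates are hypothetical and chosen only for illustration. The sketch also assumes the discovery date is known; in practice, when a victim "reasonably should have discovered" the images is a factual question the code cannot answer.

```python
from datetime import date


def add_years(d: date, years: int) -> date:
    """Return the same calendar day `years` later (Feb 29 falls back to Feb 28)."""
    try:
        return d.replace(year=d.year + years)
    except ValueError:
        return d.replace(year=d.year + years, day=28)


def filing_deadline_52b(disseminated: date, discovered: date) -> date:
    """Later of: three years after dissemination, or one year after discovery."""
    return max(add_years(disseminated, 3), add_years(discovered, 1))


# Hypothetical example: content posted in 2021 but not discovered until 2025.
posted = date(2021, 6, 1)
found = date(2025, 9, 15)
print(filing_deadline_52b(posted, found))  # 2026-09-15
```

In this example the three-year period ended on June 1, 2024, but the one-year discovery period runs to September 15, 2025 plus one year, so the later date controls.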

Who Can You Sue?

Civil liability for deepfake intimate imagery can attach to multiple parties depending on the facts.

The person who disseminated or threatened to disseminate the image. New York Civil Rights Law section 52-b is a dissemination-based statute: the civil claim attaches to the publication, distribution, or threatened disclosure of the image, not to creation alone. In many cases the person who creates the image also distributes it, but the act that triggers civil liability under current New York law is the dissemination. Under the DEFIANCE Act, if enacted, production itself would also be actionable at the federal level.

The person who distributed or shared it. Under both New York law and the DEFIANCE Act framework, distribution without consent is independently actionable. A person who receives a deepfake and sends it to others can face civil liability even if they did not create it.

The person who solicited or commissioned it. Someone who paid for or requested the creation of a deepfake of another person can be held liable as well.

In some cases, multiple defendants are involved, and claims can be pursued against each of them.

What If You Do Not Know Who Created the Image?

One of the most common questions victims ask is: what can I do if I do not know who made this?

New York courts allow civil suits to be filed against anonymous defendants, commonly identified as John Doe or Jane Doe, when the identity of the defendant is unknown. Once a lawsuit is filed, we can use the discovery process to subpoena platform records, IP address logs, payment records, and account information from the sites and services involved. Courts in New York apply a heightened standard before compelling disclosure of an anonymous defendant's identity, but that standard can be met when a plaintiff presents a real and viable claim.

We have experience navigating anonymous defendant proceedings. The fact that the person who created or distributed the image has not identified themselves does not mean civil action is off the table.

Evidence to Preserve Right Now

If you are dealing with AI-generated intimate images of yourself, there are steps you can take today that will strengthen any future legal action.

Do not delete anything. Preserve screenshots of the images, the names of the platforms they appear on, the URLs, usernames or handles, profile information, any messages you have received, and any responses you get from platforms to takedown requests. Deleting this material, even with good intentions, can make the case harder to prove.

Take timestamped screenshots. The date and time a screenshot was taken can matter in establishing when you discovered the images and in calculating the statute of limitations.

Document the spread. If the images appear on multiple platforms or in multiple locations, document each one separately. Note whether the same username or account appears in multiple places.

Preserve any communications. If anyone sent you a link to the images, threatened to share them, or communicated with you about them, preserve those messages completely. Do not respond to the sender without speaking to an attorney first.

Do not contact the person you believe created the images. Reaching out can alert them, give them time to delete evidence, or create communications that complicate the case.

Call us. We can issue preservation demands to platforms and, in some cases, obtain emergency injunctive relief to stop distribution before the case is fully filed.
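For readers who want a structured way to follow the documentation steps above, here is one possible sketch of an evidence log that records each location separately and flags usernames that appear on more than one platform. The `Sighting` fields, the function name, and all sample values are hypothetical; a spreadsheet with the same columns would serve equally well.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Sighting:
    """One place the image was found, recorded separately from every other."""
    platform: str
    url: str
    username: str
    captured_at: datetime   # when the screenshot was taken
    screenshot_file: str    # path to the saved screenshot


def accounts_on_multiple_platforms(log: list[Sighting]) -> dict[str, list[str]]:
    """Map each username (case-insensitive) to its platforms, if more than one."""
    platforms_by_user: dict[str, set[str]] = defaultdict(set)
    for s in log:
        platforms_by_user[s.username.lower()].add(s.platform)
    return {u: sorted(p) for u, p in platforms_by_user.items() if len(p) > 1}


# Hypothetical entries for illustration only.
now = datetime(2026, 3, 2, 9, 30, tzinfo=timezone.utc)
log = [
    Sighting("ExampleForum", "https://forum.example/post/1", "User123", now, "shot1.png"),
    Sighting("ExampleShare", "https://share.example/f/9", "user123", now, "shot2.png"),
    Sighting("ExampleForum", "https://forum.example/post/2", "other_acct", now, "shot3.png"),
]
print(accounts_on_multiple_platforms(log))
# {'user123': ['ExampleForum', 'ExampleShare']}
```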

How Quickly Can Content Be Taken Down?

Speed matters enormously in these cases. The longer deepfake content remains accessible, the more it spreads and the harder it becomes to contain.

Under the TAKE IT DOWN Act, covered platforms are required to remove reported intimate images within 48 hours of receiving a valid notice. We send these notices on behalf of clients and follow up on compliance.
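Tracking that 48-hour window for each notice is straightforward, as the sketch below shows. It assumes the window is measured as plain clock time from the moment the platform receives a valid notice, and the function names and sample timestamp are hypothetical.

```python
from datetime import datetime, timedelta, timezone

# Removal window for covered platforms, per the paragraph above.
REMOVAL_WINDOW = timedelta(hours=48)


def removal_due(notice_received: datetime) -> datetime:
    """Time by which a covered platform must have removed the content."""
    return notice_received + REMOVAL_WINDOW


def is_overdue(notice_received: datetime, now: datetime) -> bool:
    """True once the 48-hour window has passed without confirmed removal."""
    return now > removal_due(notice_received)


# Hypothetical notice received March 2, 2026 at 09:30 UTC.
received = datetime(2026, 3, 2, 9, 30, tzinfo=timezone.utc)
print(removal_due(received).isoformat())  # 2026-03-04T09:30:00+00:00
print(is_overdue(received, datetime(2026, 3, 4, 12, 0, tzinfo=timezone.utc)))  # True
```

Using timezone-aware timestamps avoids ambiguity when the victim, the platform, and counsel are in different time zones.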

Beyond the TAKE IT DOWN Act, most major platforms have separate policies against non-consensual intimate imagery, including AI-generated content. We know how to navigate each platform's reporting systems and how to escalate when initial reports are ignored.

For content that persists on smaller or less cooperative sites, we pursue injunctive relief through the courts. A court order requiring takedown carries legal weight that a platform report alone does not.

Deepfake Intimate Image Cases: Who We Represent

The range of people targeted by deepfake intimate imagery is wide. The following are situations we see regularly.

People targeted by ex-partners or former acquaintances. In many cases, someone who had access to photographs of the victim, usually through a prior relationship, uses AI tools to create fabricated intimate content. This often occurs in the context of a breakup, a workplace conflict, or a dispute of another kind.

People targeted by strangers online. Public-facing social media profiles, professional headshots, and any photograph that appears online can become source material for deepfake tools. Victims are sometimes targeted by people they have never met.

Minors. AI-generated intimate images involving minors raise additional legal issues under federal child exploitation statutes. If you are a parent who has discovered that your child is the subject of this kind of imagery, contact us immediately.

Professionals and public figures. Executives, healthcare workers, educators, politicians, journalists, and others whose professional reputations are tied to their image are frequently targeted. The professional and reputational damage from deepfake content can be severe and rapid.

People who discovered images during a background check, job application process, or through a third party. In some cases victims do not discover the images themselves. A friend, a family member, or an employer surfaces the content. This does not affect your legal rights.

What About the Platforms That Host the Content?

Platform liability under federal law is complex. Section 230 of the Communications Decency Act has historically shielded platforms from civil liability for user-posted content. However, this protection has limits, and the TAKE IT DOWN Act creates mandatory removal obligations that platforms must meet.

We focus our civil claims on the individuals responsible for creating and distributing the content, where liability is clearest and damages are strongest. At the same time, we use every available tool to compel platform removal, including TAKE IT DOWN Act notices, DMCA takedowns where a copyright interest exists, and platform-specific reporting channels.

What It Costs

We discuss fees directly in the first consultation. We offer free initial consultations, and we are straightforward about what a realistic case strategy looks like in your situation.

Many victims are concerned that a lawsuit is too expensive to pursue, particularly against an unknown defendant or someone with limited assets. We assess the full picture in every case, including the availability of damages, the feasibility of anonymous defendant discovery, and whether emergency injunctive relief is appropriate as a first step before full litigation. We do not string cases along. We tell you in the first conversation what the realistic options are.

Frequently Asked Questions

Is it illegal to create a deepfake of someone in New York?

Under current New York civil law, creation alone is not the trigger; the claims available today turn on distribution. Distributing or threatening to distribute AI-generated sexually explicit content depicting a real person without their consent can violate both federal and New York law depending on the circumstances. Under the TAKE IT DOWN Act, knowingly publishing such content through an interactive computer service is a federal crime. Under New York Civil Rights Law section 52-b, a victim whose intimate image was disseminated or threatened to be disseminated for the purpose of harassing, annoying, or alarming them has a civil cause of action, and the statute expressly covers images created or altered through artificial intelligence.

Can I sue someone for making a deepfake of me even if it has not been widely distributed?

Yes. New York law does not require wide distribution. Even a deepfake created by one person and shared with a small number of people can support a civil claim, particularly where the conduct was intentional and caused harm.

What is the DEFIANCE Act and is it law yet?

The DEFIANCE Act is federal legislation that would create a civil cause of action for victims of AI-generated intimate imagery. The Senate passed it unanimously on January 13, 2026. As of March 2026, it is pending a vote in the House. It is not yet signed into law, but the prospects for passage are strong given unanimous bipartisan Senate support.

What damages can I recover?

Under New York Civil Rights Law section 52-b, you can recover compensatory damages including mental anguish damages, punitive damages, attorney's fees, and court costs. Injunctive relief to require removal and prohibit further distribution is also available. If the DEFIANCE Act becomes law in its current form, federal claims would add $150,000 to $250,000 in liquidated damages plus attorney's fees and costs on top of any state law recovery.

What if I do not know who made the image?

You can still file a civil lawsuit against an anonymous defendant. The discovery process can be used to subpoena platform records, IP addresses, and account data to identify the person responsible. Courts in New York allow this process where the plaintiff has a viable underlying claim.

How quickly can the images be taken down?

Under the TAKE IT DOWN Act, covered platforms must remove reported intimate images within 48 hours of receiving a valid notice. For platforms that do not comply, or for smaller sites outside the TAKE IT DOWN Act's coverage, we pursue injunctive relief through the courts, which can result in an enforceable removal order.

What is the statute of limitations for a deepfake lawsuit in New York?

Under New York Civil Rights Law section 52-b, a claim must be filed no later than three years from the date the images were disseminated or published, or one year from the date you discovered them or reasonably should have discovered them, whichever is later. If you found out recently about content that was posted some time ago, the discovery rule may still preserve your claim. Do not assume the time has passed without speaking to an attorney first.

Does the law apply to images created using AI from a clothed photograph?

Yes. Both the DEFIANCE Act and New York state law cover images that are digitally altered or AI-generated to depict a person in a nude or sexually explicit context, regardless of whether the original source photograph was intimate.

What if I am a minor, or the victim is a minor?

If the victim is under 18, additional federal laws apply, including federal child exploitation statutes. These cases should be treated as urgent. Call us immediately at (212) 706-1007.

Contact Our New York Deepfake Image Lawyers

If you are the subject of AI-generated intimate imagery, do not wait and do not handle it alone. Call Veridian Legal P.C. at (212) 706-1007 or email info@veridianlegal.com. The consultation is free and confidential. You will speak directly with an attorney.

We will assess your situation, advise you on what evidence to preserve, and tell you directly what your legal options are under New York law, the TAKE IT DOWN Act, and the pending DEFIANCE Act. If emergency injunctive relief is available to stop the spread of content immediately, we will tell you that in the first conversation.

Practice Areas

The attorneys at Veridian Legal have years of experience representing abuse victims and those who have suffered personal injury. As your lawyers we will fight tirelessly for the justice and compensation you deserve.

Our Consultations Are Different:

Free: No cost, no obligations.

Experienced: Speak directly with an experienced revenge porn lawyer, not a paralegal.

Image Report: We'll provide a free image spread report using facial recognition technology.

Honest Assessment: We'll discuss your case's strengths and weaknesses and figure out a plan of action that’s right for you.

Your Voice Matters: Support for Survivors

Contact a Revenge Porn Lawyer Today

Revenge porn can have a devastating emotional impact on victims. If you're struggling with emotional distress due to this harmful act, don't hesitate to reach out to our experienced revenge porn lawyers at Veridian Legal. We're here to help you understand your rights, seek justice, and begin the healing process.

Meet the Team

Cali Madia

PARTNER

Daniel Szalkiewicz

PARTNER
